Explain to Fix: A Framework to Interpret and Correct DNN Object Detector Predictions

Published in SysML @ NeurIPS, 2018

Abstract

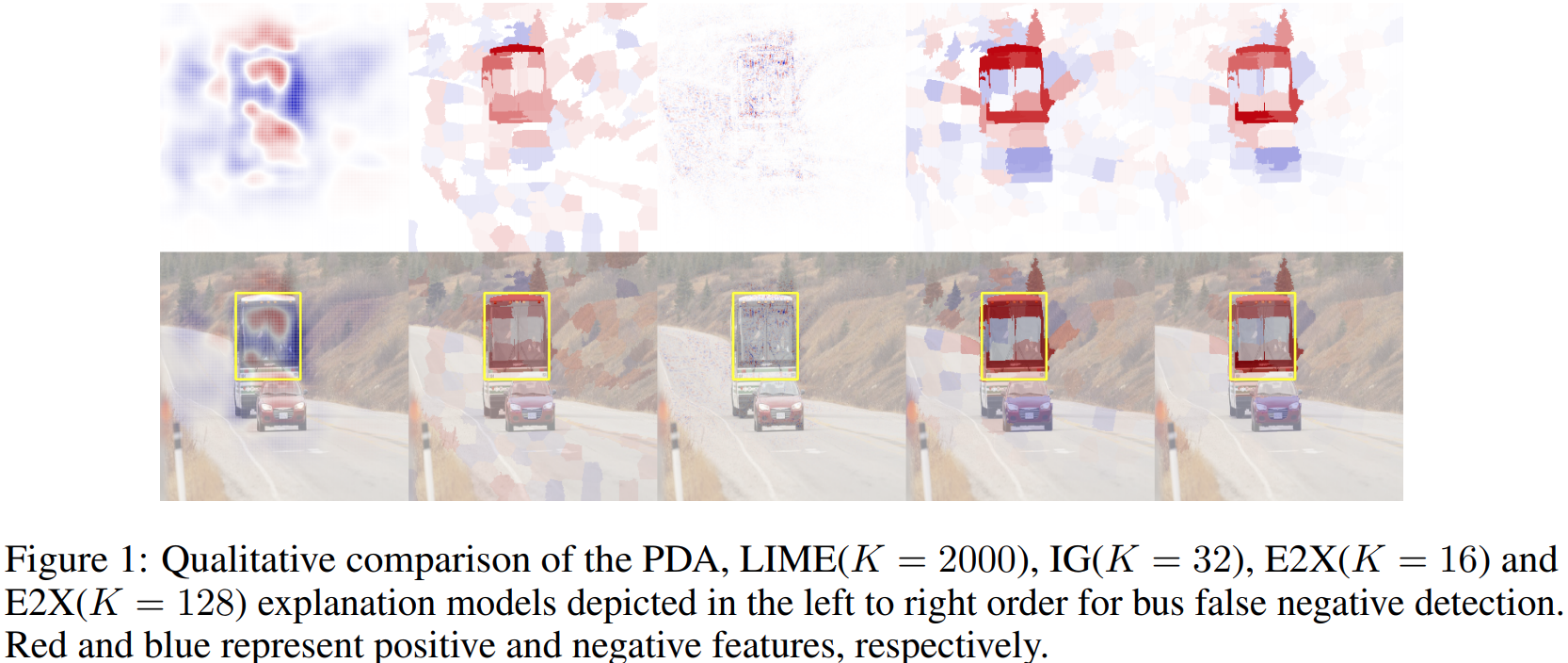

Explaining predictions of deep neural networks (DNNs) is an important and nontrivial task. In this paper, we propose a practical approach to interpreting decisions made by a DNN object detector. The approach has fidelity comparable to state-of-the-art methods and is computationally efficient enough to process large datasets. Our method relies on recent theory and approximates Shapley feature importance values. We show qualitatively and quantitatively that the proposed explanation method can be used to find image features that cause failures in DNN object detection. The resulting software tool, packaged as the “Explain to Fix” (E2X) framework, is 10 times more computationally efficient than prior methods and supports cluster processing on graphics processing units (GPUs). Lastly, we propose a potential extension of the E2X framework in which the discovered missing features are added to the training dataset so that failures are overcome after model retraining.
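To illustrate what approximating Shapley feature importance values can look like in general, here is a minimal sketch using permutation sampling. It is not the E2X algorithm: the function `shapley_monte_carlo`, the `toy_score` stand-in for a detector confidence score, and all parameter names are hypothetical and chosen only for illustration.

```python
import random
from typing import Callable, List, Sequence


def shapley_monte_carlo(
    value_fn: Callable[[Sequence[bool]], float],
    num_features: int,
    num_permutations: int = 200,
    seed: int = 0,
) -> List[float]:
    """Estimate Shapley values by averaging marginal contributions
    over randomly sampled feature orderings."""
    rng = random.Random(seed)
    totals = [0.0] * num_features
    for _ in range(num_permutations):
        order = list(range(num_features))
        rng.shuffle(order)
        mask = [False] * num_features  # start with every feature absent
        prev = value_fn(mask)
        for i in order:
            mask[i] = True             # reveal feature i
            curr = value_fn(mask)
            totals[i] += curr - prev   # marginal contribution of feature i
            prev = curr
    return [t / num_permutations for t in totals]


def toy_score(mask: Sequence[bool]) -> float:
    # Hypothetical stand-in for a detector score over three image regions:
    # regions 0 and 1 contribute on their own, region 2 only helps
    # when region 1 is also visible.
    return 0.5 * mask[0] + 0.3 * mask[1] + 0.2 * (mask[1] and mask[2])


phi = shapley_monte_carlo(toy_score, num_features=3)
```

In this toy setting the estimates sum to the difference between the score with all regions present and the score with none, which is the efficiency property of Shapley values. Exact computation needs every feature subset, which is why approximations matter for image-sized inputs.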

BibTeX

@inproceedings{e2x,
  author = {Denis Gudovskiy and Alec Hodgkinson and Takuya Yamaguchi and Yasunori Ishii and Sotaro Tsukizawa},
  title = {Explain to Fix: A Framework to Interpret and Correct DNN Object Detector Predictions},
  booktitle = {SysML workshop at Advances in Neural Information Processing Systems},
  year = {2018}
}